Introduction

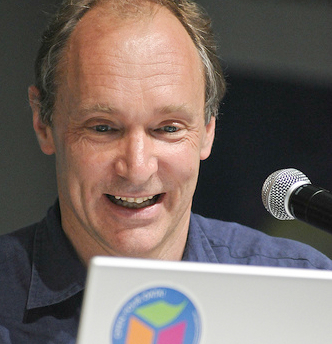

Tim Berners-Lee, the main architect of the World Wide Web (W3), developed the system in the late 1980s while working at CERN, the European Organisation for Nuclear Research. W3 was developed to overcome the difficulty of managing information exchange over the Internet. At the time, finding data on the Internet required prior knowledge gained through various time-consuming methods: specialised clients, mailing lists, newsgroups, printed lists of links, and word of mouth.

At CERN, many physicists and other staff needed to share large amounts of data and had begun to use the Internet to do so. The Internet was acknowledged as a valuable means of sharing data. By the end of the 1980s, however, the need for simpler, more reliable methods encouraged the creation of new protocols modelled on distributed hypermedia.

Developments in Open Hypermedia Systems (OHSs) gained pace throughout the 1980s. A number of stand-alone systems had been prototyped, and early attempts had been made at a standardised vocabulary [1]. OHSs provide two key features. First, link databases (‘linkbases’) are kept separate from the documents they link. Second, hypermedia functions are made available to third-party applications, potentially across heterogeneous environments.

Two key systems were at the heart of pioneering approaches to hypermedia: Hyper-G, developed by a team at the Technical University of Graz, Austria [1], and Microcosm, originating at the University of Southampton [5]. Like W3, both were launched in 1990, but within ten years both had been outpaced by W3’s overwhelming popularity. Ease of use, the management of link integrity and content reference, and the ‘openness’ of the underlying technology all contributed to W3’s success. However, the approaches to linking media taken by Hyper-G and Microcosm remain relevant to the future development of the Web.

The Dexter Hypertext Reference Model

In 1988 a group of hypertext developers met at the Dexter Inn, New Hampshire, to create a terminology for interchangeable and interoperable hypertext standards. They analysed about ten contemporary hypertext systems and described what the systems had in common. Essentially, each system provided “the ability to create, manipulate, and/or examine a network of information-containing nodes interconnected by relational links.”[6]

The Dexter Model did not attempt to specify implementation protocols, but provided a vital reference model for future developments of hypertext and hypermedia. The Model identified a ‘component’ as a single presentation field which contained the basic content of a hypertext network: text, graphics, images, and/or animation. Each component was assigned a ‘Unique Identifier’ (UID), and ‘links’ that interconnected components were resolved to one or many UIDs to provide ‘link integrity’.
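The Dexter vocabulary of components, UIDs and links translates naturally into a small data structure. The sketch below is a simplification, not the Dexter specification. In the full model a link is itself a kind of component and addresses its endpoints through anchors, while here links are a separate type. The class and method names (`Component`, `Link`, `Hypertext`, `resolve`) are hypothetical. The sketch shows how resolving links through UIDs lets a system enforce link integrity both when a link is created and when a component is removed.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Component:
    """A unit of content (text, graphic, image, animation) with a unique identifier."""
    uid: str
    content: str


@dataclass
class Link:
    """A link whose endpoints are component UIDs rather than embedded addresses."""
    uid: str
    endpoints: List[str]  # one or many UIDs


@dataclass
class Hypertext:
    """Hypothetical container for a Dexter-style network of components and links."""
    components: Dict[str, Component] = field(default_factory=dict)
    links: Dict[str, Link] = field(default_factory=dict)

    def add_component(self, component: Component) -> None:
        self.components[component.uid] = component

    def add_link(self, link: Link) -> None:
        # Link integrity on creation: every endpoint must resolve to a known UID.
        missing = [u for u in link.endpoints if u not in self.components]
        if missing:
            raise KeyError(f"unresolved UIDs: {missing}")
        self.links[link.uid] = link

    def remove_component(self, uid: str) -> None:
        # Link integrity on deletion: drop links that would otherwise dangle.
        del self.components[uid]
        self.links = {k: v for k, v in self.links.items() if uid not in v.endpoints}

    def resolve(self, link_uid: str) -> List[Component]:
        """Follow a link by resolving its UIDs to the components they identify."""
        return [self.components[u] for u in self.links[link_uid].endpoints]


if __name__ == "__main__":
    net = Hypertext()
    net.add_component(Component("c1", "Project documentation"))
    net.add_component(Component("c2", "Staff directory"))
    net.add_link(Link("l1", ["c1", "c2"]))
    print([c.uid for c in net.resolve("l1")])  # ['c1', 'c2']
    net.remove_component("c2")
    print(sorted(net.links))  # []
```

Links never store content or locations directly, only UIDs. A system built this way therefore always knows which links are affected when a component disappears.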

The World-Wide Web

By the mid-1980s Berners-Lee saw the potential for extending computer-based information management across the CERN network. Such a system would provide access to project documentation and make explicit both the ‘hidden’ skills of personnel and the ‘true’ organisational structure. He proposed that it should meet a number of requirements: remote access across networks, heterogeneity, and the ability to add ‘private links’ and annotations to documents. Berners-Lee’s key insights were that “Information systems start small and grow”, and that the system must be flexible enough to “allow existing systems to be linked together without requiring any central control or coordination”.

His proposal also stressed the different interests of “academic hypertext research” and the practical requirements of his employer. He recognised that many CERN employees were using “primitive terminals” and were not concerned with the niceties of “advanced window styles” and interface design [2].

Towards the end of 1990, work was completed on the first iteration of W3. It comprised a new Hypertext Markup Language (HTML), an ‘httpd’ server, and the Web’s first browser, which worked as an editor as well as a viewer. The underlying protocols were made freely available. Within a few years a wide variety of Internet enthusiasts had used and adapted the technology, helping to spread W3 to wider audiences.

Microcosm

Microcosm was launched in January 1990 as an “open model for hypermedia with dynamic linking” [5], aimed at solving perceived problems in contemporary hypermedia systems. The Microcosm team found that existing hypermedia systems, although useful in closed settings, had several shortcomings. They did not communicate with other applications, they used proprietary document formats, and they were not easily authored. Because they were distributed on read-only media, they also did not allow users to add links and annotations.

Microcosm used read-only media (CD-ROMs and laser discs) to host components within an authored environment, but it separated these ‘data objects’ from linkbases housed on remote servers. This local-area-network-based system allowed all users, authors and readers alike, to add advanced n-ary (multi-directional) links to multiple generic objects. Microcosm could also process a range of document types, and its modular structure gave it a degree of interoperability with W3 browsers [7].

While recognising the significance of W3, the Microcosm team identified some weaknesses, especially in the way HTML managed links. Rather than storing links separately, W3 embedded them in documents. As a result, readers could not annotate or edit web documents, and links were left ‘dangling’ (pointing at nothing) when documents were deleted or URLs changed. HTML was also limited in how links could be made: only a small number of tags were allowed, and only single-ended, unidirectional links could be authored. To counter these link integrity issues, the Microcosm team developed the Distributed Link Service (DLS), which integrated linkbase technology into a W3 environment [3].
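The fragility of embedded links can be illustrated with a short sketch. A link lives only inside its source page, so the only way to find dangling links is to re-scan every page and check each `href` against what still exists. In the sketch, `existing` is a hypothetical set of currently published addresses; no such global list exists on the real Web, which is part of the problem described above. The parsing uses Python's standard `html.parser` module.

```python
from html.parser import HTMLParser
from typing import List, Optional, Set, Tuple


class AnchorCollector(HTMLParser):
    """Collects the href of every <a> tag, i.e. the links embedded in the page."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: List[str] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        # HTMLParser lowercases tag and attribute names before calling this.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value is not None:
                    self.hrefs.append(value)


def dangling_links(html: str, existing: Set[str]) -> List[str]:
    """Return embedded hrefs that no longer point at an existing document.

    `existing` is a hypothetical set of published addresses, assumed for illustration.
    """
    parser = AnchorCollector()
    parser.feed(html)
    parser.close()
    return [h for h in parser.hrefs if h not in existing]


if __name__ == "__main__":
    page = '<p>See <a href="proposal.html">the proposal</a> and <a href="old.html">notes</a>.</p>'
    print(dangling_links(page, {"proposal.html"}))  # ['old.html']
```

The target page has no record of who links to it, so deleting `old.html` cannot notify the page that embeds the link. A separate linkbase is designed to close that gap.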

Using the DLS, W3 servers could access linkbases, enabling users to author generic as well as specific links. A generic link connects every mention of a given phrase within a set of documents to the same destination. The DLS also allowed bi-directional links within documents.
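The difference between generic and specific links, and the way a separate linkbase permits following links backwards, can be sketched as below. This is a simplified model assumed for illustration, not Microcosm's or the DLS's actual link format. Phrase matching is a case-sensitive substring search. The names `LinkRecord`, `Linkbase`, `links_for` and `links_to` are hypothetical, as are the destination identifiers.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class LinkRecord:
    phrase: str                       # source anchor text
    destination: str                  # target document (hypothetical identifier)
    source_doc: Optional[str] = None  # None means a generic link: applies to every document


class Linkbase:
    """Links held apart from documents, so read-only documents can still be linked."""

    def __init__(self) -> None:
        self.records: List[LinkRecord] = []

    def add(self, record: LinkRecord) -> None:
        self.records.append(record)

    def links_for(self, doc_id: str, text: str) -> List[Tuple[int, str, str]]:
        """Compute (offset, phrase, destination) for every link that applies to a document."""
        found = []
        for r in self.records:
            if r.source_doc is not None and r.source_doc != doc_id:
                continue  # a specific link that belongs to some other document
            for m in re.finditer(re.escape(r.phrase), text):
                found.append((m.start(), r.phrase, r.destination))
        return sorted(found)

    def links_to(self, destination: str) -> List[Tuple[Optional[str], str]]:
        """Follow links backwards: which (source_doc, phrase) pairs point here?"""
        return [(r.source_doc, r.phrase) for r in self.records if r.destination == destination]


if __name__ == "__main__":
    lb = Linkbase()
    lb.add(LinkRecord("hypertext", "glossary/hypertext"))        # generic
    lb.add(LinkRecord("CERN", "about/cern", source_doc="doc1"))  # specific
    print(lb.links_for("doc1", "CERN studied hypertext."))
    # [(0, 'CERN', 'about/cern'), (13, 'hypertext', 'glossary/hypertext')]
    print(lb.links_for("doc2", "CERN and hypertext"))
    # [(9, 'hypertext', 'glossary/hypertext')]
```

Links are computed when a document is viewed rather than stored in it, so one generic record covers any number of documents. The same table can also be queried from the destination end.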

Hyper-G

Hyper-G offered a number of solutions to the linking issues identified by others working on hypermedia systems. Like Microcosm, Hyper-G stored links in link databases, which had several benefits:

- users could attach their own links to read-only documents;
- multiple links could be made to documents, to anchors within text, or to any other media object;
- users could readily see which objects were linked;
- links could be followed backwards, so users could see “what links to what”.

Unlike Microcosm, Hyper-G used an advanced probabilistic flood (‘P-Flood’) algorithm to propagate updates to remote documents and linkbases. This ensured link integrity and consistency: in essence, links were informed when the documents they referred to had been deleted or changed.
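The text names P-Flood without describing its mechanics, so the following is only a rough simulation of the probabilistic-flooding idea under stated assumptions, not Hyper-G's implementation. It assumes that servers sit on a logical ring. In each round, every server that already holds an update forwards it to its ring successor plus `p - 1` randomly chosen servers. The ring-plus-random-peers arrangement and the parameter `p` follow common descriptions of P-Flood but should be treated as assumptions. The function name `p_flood_rounds` is hypothetical, and the real system's scheduling, batching and failure handling are omitted.

```python
import random
from typing import Set


def p_flood_rounds(n_servers: int, origin: int, p: int, seed: int = 0) -> int:
    """Count rounds until one update, starting at `origin`, reaches every server.

    Assumed model: servers 0..n-1 sit on a logical ring. Each round, every server
    that already holds the update forwards it to its ring successor plus p - 1
    other servers chosen at random. The successor step guarantees delivery within
    n - 1 rounds; the random extra targets usually make it much faster.
    """
    if p < 1:
        raise ValueError("p must be at least 1")
    rng = random.Random(seed)
    informed: Set[int] = {origin}
    rounds = 0
    while len(informed) < n_servers:
        rounds += 1
        reached: Set[int] = set()
        for s in sorted(informed):  # sorted so random draws are reproducible
            reached.add((s + 1) % n_servers)
            others = [t for t in range(n_servers) if t != s]
            reached.update(rng.sample(others, min(p - 1, len(others))))
        informed |= reached
    return rounds


if __name__ == "__main__":
    print(p_flood_rounds(100, origin=0, p=1))  # pure ring: 99 rounds
    print(p_flood_rounds(100, origin=0, p=3))  # successor plus 2 random peers per round
```

In this model, `p` trades network traffic for speed. With `p = 1` the update simply walks the ring, while larger values spread it faster at the cost of more messages. The ring successor ensures that no server is missed even when the random choices are unlucky.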

Like W3, Hyper-G was a client-server system with its own protocol (HG-CSP) and markup language (HTF). Hyper-G browsers integrated with the Internet services W3, WAIS and Gopher, supported a range of objects (text, images, audio, video and 3D environments), and offered integrated authoring functionality with support for collaboration.

Hyper-G was a highly advanced system that successfully applied key hypermedia principles to managing data on the Internet. As the web usability expert Jakob Nielsen asserted, it offered “some sorely needed structure for the Wild Web” [8].

Why W3 Won

Despite its acknowledged limitations, W3 retained its position as the de facto means of navigating the Internet, and it continued to grow and spread its influence. The reasons for this are relatively straightforward.

W3 was free and relatively easy to use; anyone with a computer, a modem and a phone line could set up their own servers, build web sites and start publishing on the Internet without having to pay fees or enter into contractual relationships.

W3 was limited in terms of hypermedia capability, but these shortcomings were not serious enough to prevent users from taking advantage of its data sharing and simple linking functions. Users could ignore dangling links because search engines let them find other resources. Improved browsers also let users keep track of their browsing history and backtrack through visited pages.

In contrast, Microcosm and Hyper-G were developed, in their early stages at least, as local systems. This allowed them to use superior technology to manage complex linking operations much more effectively than W3. However, the local focus produced systems that were significantly more complex to manage than W3 and difficult to scale up to the wider Internet. In addition, it was not clear which parts, if any, were free to use. Both systems promoted commercial versions early in their development, which had the unintended effect of stifling adoption beyond an initial core group of users.

Future directions

W3 has developed into a sophisticated system that provides many of the open hypermedia functions that were lacking in its early stages. Attempts to integrate hypermedia systems with W3 [3],[4],[9] and to solve linking and data storage issues influenced the development of the open standard Extensible Markup Language (XML) and of the XPath, XPointer and XLink syntaxes. HTML describes documents and the links between them, whereas XML carries descriptive data that can add to or replace the content of web documents. XPath describes addressable elements within XML documents, XPointer describes arbitrary ranges, and XLink describes connections between anchors.
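The sketch below shows how XPath-style addressing and XLink attributes look in practice. It uses Python's standard `xml.etree.ElementTree` to select elements with a path expression and to read XLink `simple` links from their `xlink:type` and `xlink:href` attributes. The document structure (`article`, `section`, `ref`) is hypothetical. ElementTree supports only a limited subset of XPath and does not interpret XLink itself; the code simply reads the attributes in the XLink namespace. The `#model` fragment marks where an XPointer expression would go, and here it is reported as a plain string.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional, Tuple

XLINK_NS = "http://www.w3.org/1999/xlink"  # namespace defined by the XLink specification

SAMPLE = """<article xmlns:xlink="http://www.w3.org/1999/xlink">
  <section id="intro">
    <para>See the <ref xlink:type="simple" xlink:href="dexter.xml#model">Dexter model</ref>.</para>
  </section>
  <section id="history">
    <para>Compare <ref xlink:type="simple" xlink:href="hyperg.xml">Hyper-G</ref>.</para>
  </section>
</article>"""


def simple_links(xml_text: str) -> List[Tuple[Optional[str], Optional[str], Optional[str]]]:
    """Return (section id, anchor text, href) for each XLink simple link."""
    root = ET.fromstring(xml_text)
    type_attr = f"{{{XLINK_NS}}}type"  # ElementTree's {namespace}local-name form
    href_attr = f"{{{XLINK_NS}}}href"
    found = []
    # ".//section" is one of the limited XPath expressions ElementTree supports.
    for section in root.findall(".//section"):
        for el in section.iter():
            if el.get(type_attr) == "simple":
                found.append((section.get("id"), el.text, el.get(href_attr)))
    return found


if __name__ == "__main__":
    for link in simple_links(SAMPLE):
        print(link)
```

XLink itself also defines `extended` links with multiple locators and arcs, which can express multi-ended links of the kind Microcosm and Hyper-G supported. This sketch handles only the simple, HTML-like case.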

XML may be combined with the Resource Description Framework (RDF) and the Web Ontology Language (OWL) to store descriptive data that make web content more useful than simple HTML. These standards make web content machine-readable. Applications can therefore interrogate data and automate many web activities that previously only human readers could perform. They are seen as precursors to the ‘Semantic Web’, a development of W3 that links data points through multi-directional relationships rather than connecting documents with uni-directional links [10].
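RDF describes data as subject–predicate–object statements (triples). Any position in a triple can be queried, so a relationship can be traversed from either end, unlike an HTML link. The toy store below sketches this idea in plain Python. It is not an RDF implementation: the `ex:` identifiers and predicates are made up for illustration, real RDF uses IRIs and typed literals, and OWL reasoning is not modelled. Libraries such as rdflib provide full RDF support.

```python
from typing import List, NamedTuple, Optional, Set


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


class TripleStore:
    """A toy in-memory set of subject-predicate-object statements."""

    def __init__(self) -> None:
        self.triples: Set[Triple] = set()

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self.triples.add(Triple(subject, predicate, obj))

    def match(self, subject: Optional[str] = None, predicate: Optional[str] = None,
              obj: Optional[str] = None) -> List[Triple]:
        """Pattern match where None acts as a wildcard, so any position can be queried."""
        return sorted(
            t for t in self.triples
            if (subject is None or t.subject == subject)
            and (predicate is None or t.predicate == predicate)
            and (obj is None or t.obj == obj)
        )


if __name__ == "__main__":
    kb = TripleStore()
    kb.add("ex:BernersLee", "ex:developed", "ex:W3")
    kb.add("ex:W3", "ex:launchedIn", "1990")
    kb.add("ex:Microcosm", "ex:launchedIn", "1990")
    # Forwards: what did Berners-Lee develop?
    print(kb.match(subject="ex:BernersLee"))
    # Backwards: which systems were launched in 1990?
    print([t.subject for t in kb.match(predicate="ex:launchedIn", obj="1990")])
```

The same statement about W3 answers questions from both ends, which is the "multi-directional" property the paragraph above contrasts with one-way document links.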

References

[1] Keith Andrews, Frank Kappe, and Hermann Maurer. The Hyper-G Network Information System. J.UCS: The Journal of Universal Computer Science, pages 206–220. Springer, 1996.

[2] Tim Berners-Lee. Information Management: A Proposal. CERN, 1989.

[3] Les A Carr, David C DeRoure, Wendy Hall, and Gary J Hill. The Distributed Link Service: A Tool for Publishers, Authors and Readers. 1995.

[4] Hugh Davis, Andy Lewis, and Antoine Rizk. OHP: A Draft Proposal for a Standard Open Hypermedia Protocol (Levels 0 and 1: Revision 1.2, 13th March 1996). In 2nd Workshop on Open Hypermedia Systems, Washington, 1996.

[5] Andrew M Fountain, Wendy Hall, Ian Heath, and Hugh C Davis. Microcosm: An Open Model for Hypermedia with Dynamic Linking. In ECHT, pages 298–311, 1990.

[6] Frank Halasz, Mayer Schwartz, Kaj Grønbæk, and Randall H Trigg. The Dexter Hypertext Reference Model. Communications of the ACM, 37(2):30–39, 1994.

[7] Wendy Hall, Hugh Davis, and Gerard Hutchings. Rethinking Hypermedia: The Microcosm Approach, Volume 67. Kluwer Academic Publishers, Dordrecht, 1996.

[8] Hermann Maurer. Hyperwave – The Next Generation Web Solution, Institute for Information Processing and Computer Supported Media, Graz University of Technology, [Online: http://www.iicm.tugraz.at/hgbook Accessed 5 December 2013].

[9] Dave E Millard, Luc Moreau, Hugh C Davis, and Siegfried Reich. FOHM: A Fundamental Open Hypertext Model for Investigating Interoperability Between Hypertext Domains. In Proceedings of the Eleventh ACM Conference on Hypertext and Hypermedia, pages 93–102. ACM, 2000.

[10] Nigel Shadbolt, Wendy Hall, and Tim Berners-Lee. The Semantic Web Revisited. IEEE Intelligent Systems, 21(3):96–101, 2006.
